Why Ethical AI Needs Good UX

- ecw373

- Mar 29, 2023


OpenAI's GPT-4 is currently winning the race for the most functional, enjoyable large language model (LLM) out there. Like GPT-3.5 before it, GPT-4 is being taken up very quickly and used in creative ways, sometimes without sufficient understanding of the product's limitations. This is partially due to poor UX writing.

So far, I've heard of it being used to

produce meal plans and grocery lists,

edit non-native English speakers' writing,

explain a medical report in plain language when the patient had to wait a day to see a doctor,

stand in for a search engine,

and more.

Some of these uses are better than others, as we shall see shortly.

While OpenAI has successfully put certain safety measures in place in GPT-4, some problems remain that it has not fully addressed, including sub-par UX writing. Here's what OpenAI did right and wrong this time:

What OpenAI Did Right This Time

More Publicly Available Research: OpenAI's release of GPT-4 was accompanied by more information about the AI, including academic papers estimating how many jobs would be affected by the technology, a system card that details safety risks and the work done to mitigate those risks, and more thorough data that indicates the abilities and limitations of the current system.

Fairly Robust Internal Safety Measures: It is also somewhat difficult to get GPT-4 to produce misinformation, at least in my short time playing around with it. I'm sure that people will find ways to jailbreak the AI, but the safety features within the AI engine itself are fairly robust and tend to prevent harmful content.

(I am immensely peeved at having to read "As an AI language model," at the beginning of all the safety responses the AI is trained to give.)

What OpenAI Did Wrong This Time

Ongoing Privacy Concerns: Only six days after OpenAI launched GPT-4 on March 14th, a bug in an open-source library caused some users to see the titles, and potentially the first messages, of other users' chats with the bot. Additionally, the first and last names, email addresses, billing addresses, credit card expiration dates, and last four digits of the credit card numbers of 1.2% of ChatGPT Plus subscribers who were active during the nine-hour window before the issue was resolved were exposed.

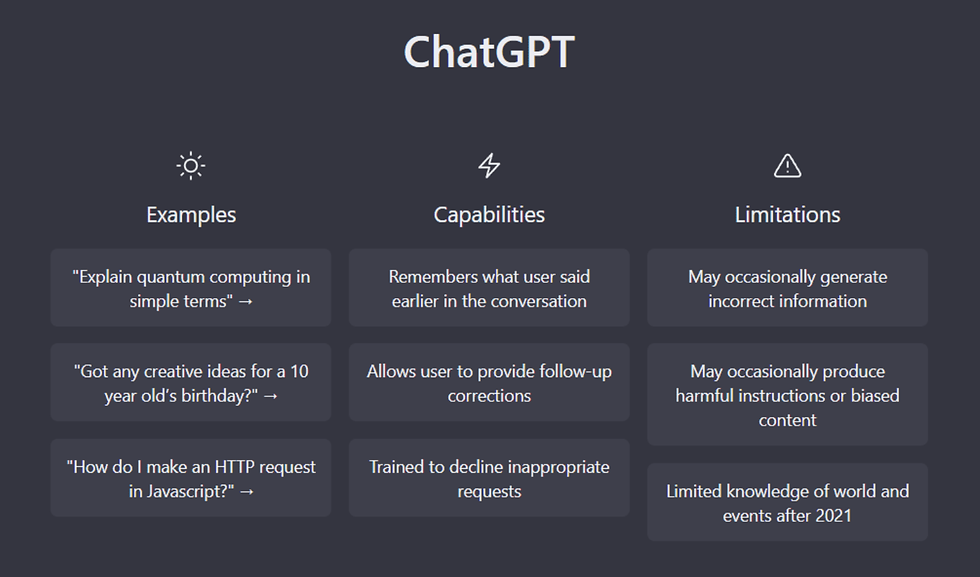

Sub-par UX Writing: The UX writing that introduces users to GPT-4 does not adequately communicate the limits of what it can do. While it does state that ChatGPT may "occasionally generate incorrect information" or "harmful instructions or biased content," these limitations are not highlighted and are easy for users to miss. It's also unclear from this writing how often the bot will make mistakes.

Once in the chat module, there is no UX writing to remind the user of privacy policies, accuracy limitations, or how user data will be stored. There is a link to Updates & FAQ, but the page it links to is hard to skim and does not answer common questions at a glance.

Why AI Needs Good UX

Even though GPT-4 is significantly better than GPT-3.5 in terms of reasoning ability and accuracy, I still caught at least five mistakes in my first week of occasional use.

With this error rate in mind, return to the initial examples of how people are already using GPT-4. How risky is each use case?

As a meal planner, GPT-4 is limited. I asked it to write me a recipe for Yukgaejang (a Korean spicy beef soup) without fernbrake, and it gave me a recipe with gosari, which is fernbrake. For those with severe allergies, these kinds of mistakes pose a significant risk.

Targeted editing is one of GPT-4's strong suits as far as I can tell, though it struggled with synthesizing material from my dissertation. Depending on the writing sample, using GPT-4 as an editor may be just fine. However, if the writing involves sufficiently complex ideas or concepts that GPT-4 has not been trained to handle, its edits could introduce errors or distort the author's meaning.

Using it to decode medical text before meeting with a doctor is significantly better than trying to decode medical documents with no explanation at all, but the current error rates could still cause initial misunderstandings that linger. If no experts are involved, this is a high-risk case.

Finally, a fair number of people are uncritically using GPT-4 as a search engine, seemingly not caring that it often gets things wrong or simply makes things up. Clearer UX writing would make users more aware of the error rate.

OpenAI needs to work on their UX to make the app more ethical and understandable to users, with a focus on communicating risks and limitations. Even the best safety standards internal to the AI itself are not enough to ensure an ethical user experience.

Good UX is still needed, even for AI.

Decorative Photo Credit: Lisa Yount
